2023 Year in Review

Hey friends,

Here's a short post reviewing my 2023. I'll go through the goals I set in 2022, some highlights from the year (e.g., diving into language modeling), goals for 2024, and some stats from 2023. If you have a review of your own, please reply with it; I'd love to read it.

• • •

2023 was a peaceful year of small, steady steps. There were no major lifestyle changes and I had the time and energy to explore new interests and focus on learning. Here’s my 2023 year in review, including goals, highlights, and statistics.

Goals

First, checking in on the goals I had set for myself last year:

- Write 26 posts 💪: I wrote 20 posts, hacked on five prototypes, and gave two talks. My writing covered topics such as project mechanisms, content moderation, ML patterns, experiments with LLMs, and LLM patterns. The last two were well received and gained the 🔥 tag (>20k views).

- Learn something new ✅: Though I learned a lot about Search on the job, I lacked the fundamentals. Grant Ingersoll and Dan Tunkelang’s excellent Search track helped to fill this gap. I also dove deep into content moderation and labeling queues for work, and spent many nights and weekends tinkering with LLMs.

- Work with a career coach 💪: GPT-4 has been effective at helping me reflect, consider various perspectives, and identify next steps. That said, I wonder if I should still get a human career coach, and am open to recommendations!

- Advance the industry ✅: I think my writing on LLMs has helped here. That said, I haven’t convinced enough people that lexical search should be part of RAG.

- Learn to snowboard ✅: My wife and I started on the blue slopes this week!

- Read fiction ❌: I was mostly occupied with the firehose of GenAI papers and advancements. Nonetheless, I was able to make time for some fiction, mostly sci-fi (Pandemic, Genome) and short stories (Ted Chiang, Cixin Liu).

- Meditate 60 minutes daily ❌: I didn’t do the full 60 minutes and funneled some time towards exercise and journaling instead.

I wish I had found more time to meditate and read fiction, but it’s been tough this year with all the learning I’ve had to do. Nonetheless, I’m happy with the progress on the other goals. Beyond the goals, I also had a few other highlights.

Exploring LLMs

With the step-change improvement from gpt-3.5-turbo, I started paying more attention to language modeling (LM) at the start of 2023. Thankfully, I had some familiarity with the subject, having previously applied language models to recommender systems and experimented with gpt-3 for simple summarization. To catch up, I did some experimentation and prototyping to learn the strengths and limits of autoregressive LMs. These prototypes included Discord bots, UIs for interacting with LLMs, an Obsidian copilot based on lexical and embedding-based RAG, and a hallucination classifier finetuned on out-of-domain data.

This self-learning set me up for success at internal hackathons. Our first prototype, codenamed Dewey, did well (shout-out to my awesome partner-in-crime Kelly N!). This gave me the courage to ask if I could start working on GenAI at least one day per week, and perhaps eventually transition to half-time. This request went better than expected…

Transitioning to a new role

Since then, my charter has expanded to include working on GenAI initiatives across the org. This includes educating the working level and senior leadership, as well as figuring out how to deploy GenAI reliably and cost-effectively at scale. We’ve organized two fruitful hackathons: two prototypes have been launched while two more are in progress.

Writing and speaking

Although I didn’t hit my goal of 26 posts, I’m proud of the writing I’ve done this year. Some pieces that have been impactful include:

- Patterns for Building LLM-based Systems & Products

- More Design Patterns For Machine Learning Systems

- Content Moderation & Fraud Detection - Patterns in Industry

- Evaluation & Hallucination Detection for Abstractive Summaries

Much of my writing is a byproduct of my work building ML systems, and in return it contributes to me being more effective at the work. Writing helps identify and fill my knowledge gaps, refine my thinking, and scale my sharing. (I learned that my leadership reads my writing and tweets 😱)

As usual, I found writing easier when I wrote with a specific person in mind (instead of a vague general audience). Some example pieces and their audience-of-one include:

- Dependency teams and Matching LLM patterns were written for mentees

- Labeling guides and Attention & Transformers were written for PMs

- Project mechanisms and Team mechanisms were written for tech managers

- LLM patterns and Summarization evals were written for senior leaders

I also had the opportunity to speak at the inaugural AI Engineer Summit. The energy was inspiring (my recap here) and I got the chance to connect and stay in touch with several practitioners as we figure out how to use this new technology in production.

In addition, I gave an invited keynote at the Amazon Machine Learning Conference. I took the chance to share the awesome work our team had done on session-based retrieval, contextual ranking, and cost-effective, just-in-time infrastructure. (I previously presented a public version at RecSys 2022 as a keynote too.)

Paper club

A few friends and I started a paper club to read and discuss fundamental papers in the LM space. I believe we’ve learned more as a group than we could have individually, by pooling our knowledge, experience, and questions. Here are one-sentence summaries for the earlier papers.

Angel investments

I made three angel investments in ML and tooling startups. I’ll likely keep to this volume of investments going forward. I’ve also become more selective, focusing on startups where I can provide the most value, mostly in the field of data and ML.

Health

I had a minor health scare (that involved bruising easily) that prompted me to reexamine my diet and exercise. My wife and I paid closer attention to our nutrition, such as by reducing saturated fat and alcohol. We continued to keep sugar and processed food to a minimum.

Also, while I’ve been consistent with weight training, I’ve been neglecting cardio. Thus, I started forcing myself to jog at least twice a week. On challenging weeks, I make do with 45-minute brisk walks. This has improved my resting heart rate and VO2 max.

Goals for 2024

- Work: Continue shipping ML systems that serve customers at scale. Prototype on the side to test new tech (e.g., vision). Teach what I’ve learned.

- Writing: Write 6 good pieces. I previously focused on quantity (writing once a week) to build the habit. Now that it’s stable, I’ll invest more time into each piece, especially write-ups that require more research or prototyping.

- Health: Minimize sugar, saturated fat, alcohol, and processed food in my diet. Exercise five days a week, with at least two days of cardio. 30 minutes of gratitude journaling and meditation first thing in the morning.

- Travel: Make time for two vacations (likely Las Vegas and Alaska).

- Snowboarding: Aiming to start hitting the black slopes by the end of the year.

- Learn something new: This is standard by now but I’ll keep it for tracking.

Mission statement (v5)

I wrote my first mission statement in 2013 and have been revising it every year or so. Here’s the latest iteration, written while on the flight back from SF after a particularly inspiring and energizing week. Fun fact: It started as a tweet and fits in 280 chars.

Statistics for 2023

Here’s a word cloud of my writing in 2023. The top themes (user, data, model) have been consistent though the focus on LLMs is new. (Word cloud from a previous year here.)

Word cloud of my writing in 2023

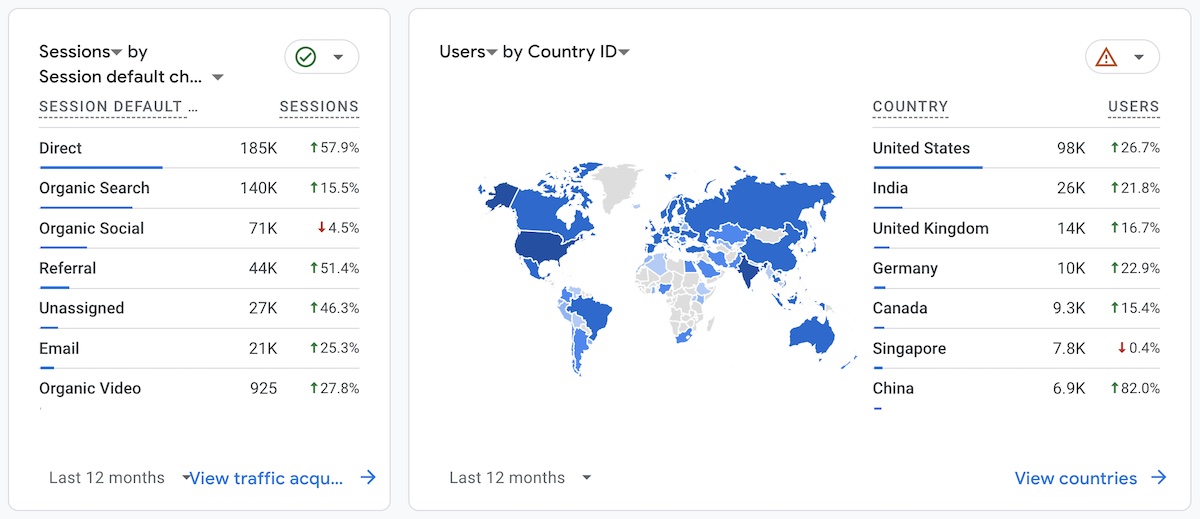

This site saw 259k unique visitors in 2023, an increase of 21% from 2022.

Number of unique visitors in 2023

The incoming channels were mostly direct, organic search, and organic social. The US is the largest source by far (and it looks like my audience in Singapore is tapering 😢).

Channel and geography of readers in 2023

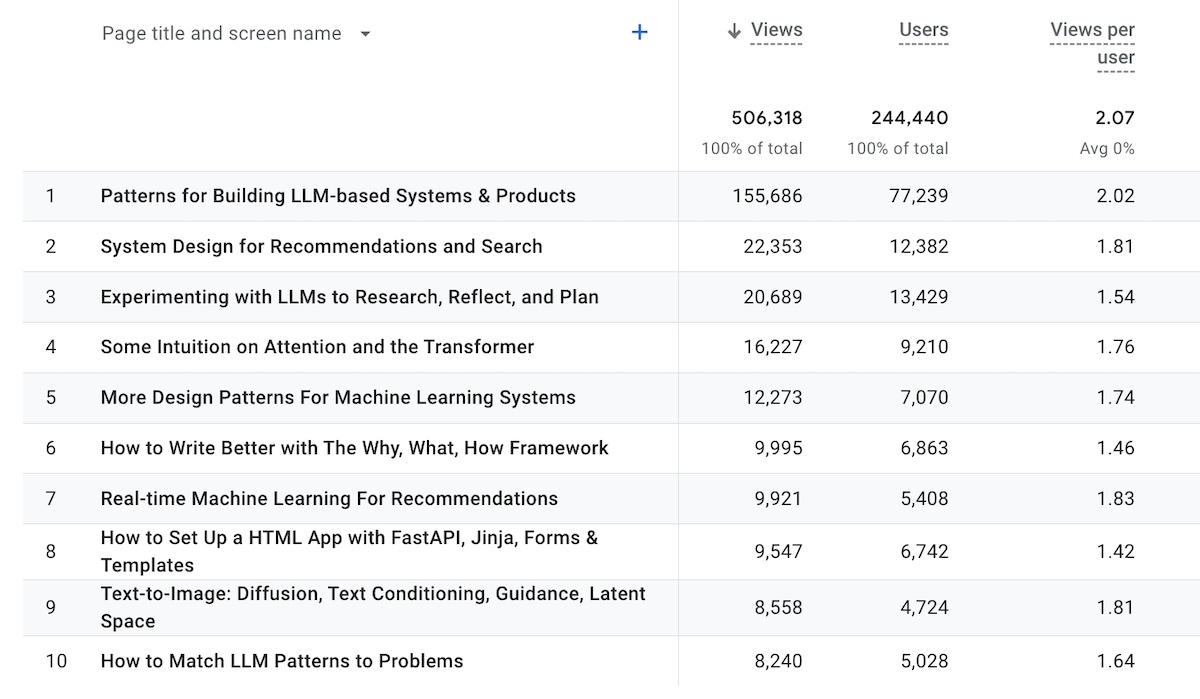

The top pages in 2023 were mostly broader pieces on patterns and system design. Old but gold pieces continue to be in the top 10, including: (i) recsys system design, (ii) writing why what how, and (iii) real-time retrieval.

Top 10 most visited pages in 2023

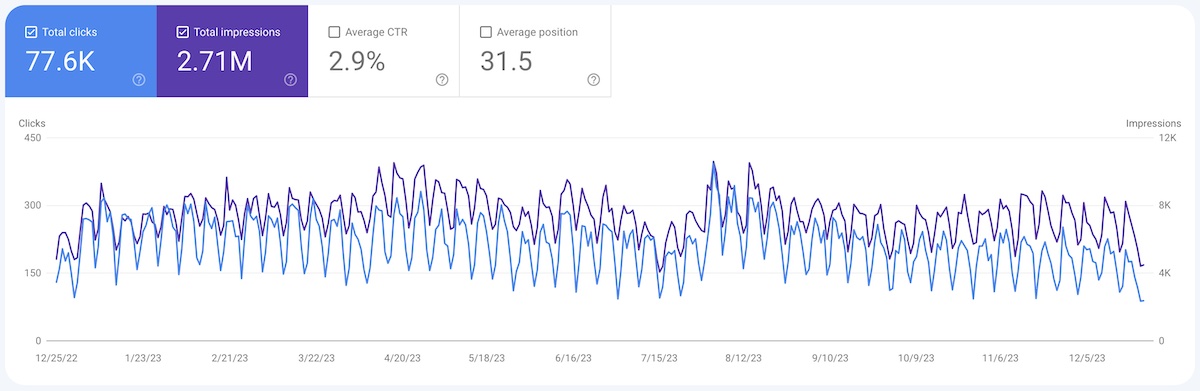

Clicks via Google search were mostly flat at 77.6k, though impressions increased from 2.14M to 2.71M (+27%). As a result, average CTR dropped from 3.6% to 2.9% (-19%).

Google search traffic in 2023

Miscellaneous social metrics

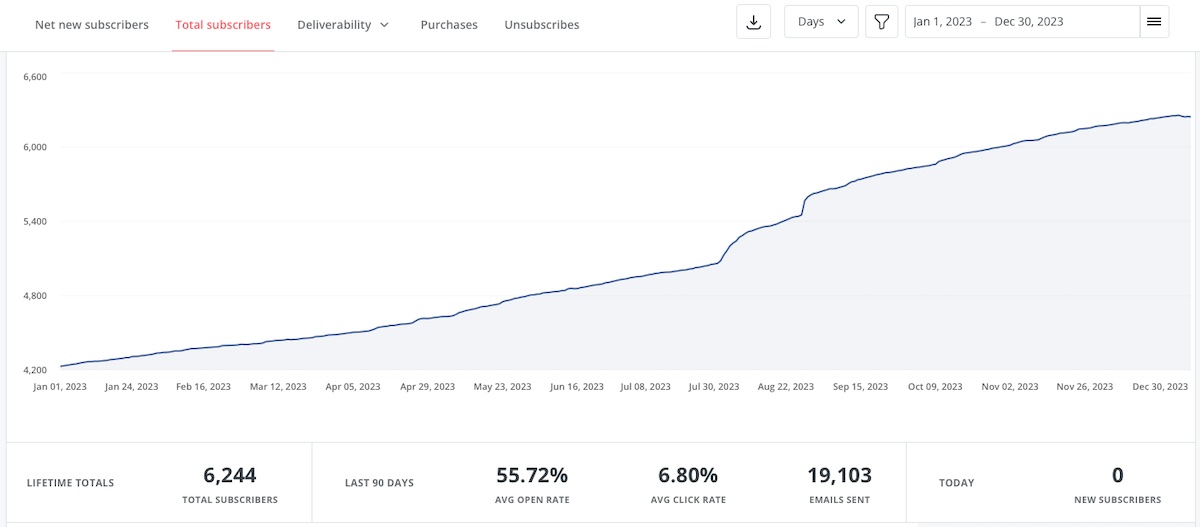

- Email: Subscriber count grew from 4.2k to 6.2k; 55.7% open rate, 6.8% click rate.

- Twitter: Follower count grew from 9.5k to 15.8k.

- LinkedIn: Follower count grew from 27.1k to 32.7k.

Email subscriber growth in 2023

Eugene Yan